Instrumentation or Death: Mastering Gemini CLI in an Android Project

Generating code has become too easy.

I had a telling experience recently. In a pet project, I tried "vibe-coding" with almost no review, following the principle of "whatever the agent writes, we accept." At first, it felt fast and convenient. But very quickly, the project started falling apart: the code became bloated and unmaintainable, core functionality began to break, and I saw latency spikes, memory leaks, and a whole range of issues that usually take months to accumulate. Here, they appeared almost instantly.

At some point, it became obvious: the problem isn't that the agent writes "bad" code. The problem is the speed at which this code accumulates without oversight.

What Breaks with This Approach

When you remove manual control, typical patterns start to emerge:

- Bypassing architectural layers;

- Logic duplication;

- Blurred module boundaries;

- Random dependencies;

- Unnecessary code complexity;

- Gradual performance degradation.

Crucially, this doesn't happen all at once. Each individual step looks "fine," but collectively, the system quickly spirals out of control.

Why a Single Prompt Wasn't Enough

My first attempt was the obvious one—I put together a massive rules.md where I defined:

- Architectural constraints;

- Naming conventions;

- Layering rules;

- Local project agreements.

This helped partially, but it didn't solve the problem.

In practice, I found that:

- Model behavior over long contexts is unstable;

- Some rules get ignored over time;

- The model doesn't always apply constraints consistently;

- Cost and response time grow with the prompt size.

I eventually reached a more pragmatic conclusion: Important rules must be enforced, not just described.

1. Konsist: Architecture as an Executable Contract

An agent, by default, takes the path of least resistance. If it can bypass a layer to get the job done—it will.

To restrict this, I started defining the architecture through tests using Konsist, a tool for verifying architectural rules in Kotlin code via unit tests.

Example:

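```kotlin
import com.lemonappdev.konsist.api.Konsist
import org.junit.Assert.fail
import org.junit.Test

class UseCaseArchitectureTest {

    @Test
    fun `classes with UseCase suffix should reside in domain package`() {
        val violations = Konsist.scopeFromProject()
            .classes()
            // Only classes named as use cases are subject to this rule...
            .filter { it.name.endsWith("UseCase") }
            // ...and only those outside com.core.domain ("..") also matches subpackages) are violations
            .filterNot { it.resideInPackage("com.core.domain..") }

        if (violations.isNotEmpty()) {
            // Fix-oriented message: the agent reads it straight from the test output
            val message = buildString {
                violations.forEach { useCase ->
                    appendLine("VIOLATION: ${useCase.name} is outside com.core.domain (${useCase.path})")
                }
                appendLine("FIX: Move each listed class to the com.core.domain package")
            }
            fail(message)
        }
    }
}
```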

Two things are critical here:

- The check catches the violation;

- The error message gives clear instructions on how to fix it.

The agent no longer works "in a vacuum" but within a tight feedback loop.
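On the agent side, the loop only needs the instructions, not the whole build log. A minimal sketch, assuming the raw test output is captured as text: it keeps the lines that carry the `VIOLATION:`/`FIX:` markers used in the message above and drops repeats. Because a marker may be preceded by an exception class name (for example `java.lang.AssertionError: `), it searches for the marker anywhere in a line. `extract_fix_instructions` is a hypothetical helper name, not part of Konsist.

```python
# Markers used in the fix-oriented failure messages of the architecture tests.
MARKERS = ("VIOLATION:", "FIX:")


def extract_fix_instructions(test_output: str) -> list[str]:
    """Return the unique VIOLATION:/FIX: instructions found in raw test output."""
    found = []
    for line in test_output.splitlines():
        positions = [line.find(marker) for marker in MARKERS if marker in line]
        if not positions:
            continue
        # Drop any prefix (such as an exception class name) before the first marker.
        instruction = line[min(positions):].strip()
        if instruction not in found:
            found.append(instruction)
    return found
```

The result is a handful of lines the agent can act on directly, instead of a full Gradle transcript.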

2. Log Compression: Less Noise, Faster Iterations

One major issue I encountered was log volume.

If you feed the agent the full output from Gradle or JUnit, it gets lost in the data. The context gets filled with noise rather than signal.

So, I built a simple compression layer:

- Keep only failed tests;

- Extract short error messages;

- Limit stack traces;

- Strip everything else.

Example:

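```python
import os
import xml.etree.ElementTree as ET


def parse_xml_reports(root_dir, max_stack_lines=15):
    """Collect a compact summary of failed tests from JUnit XML reports under root_dir."""
    summary = []
    for dirpath, _dirnames, filenames in os.walk(root_dir):
        for filename in sorted(filenames):
            if not filename.endswith(".xml"):
                continue
            root = ET.parse(os.path.join(dirpath, filename)).getroot()
            for testcase in root.iter("testcase"):
                failure = testcase.find("failure")
                if failure is None:
                    continue  # passed or skipped: nothing to report
                message = failure.get("message", "No message")
                text = failure.text or ""
                # Keep only the top of the stack trace
                stacktrace = "\n".join(text.strip().split("\n")[:max_stack_lines])
                summary.append(f"FAILED: {testcase.get('classname')}.{testcase.get('name')}")
                summary.append(f"Message: {message}")
                summary.append(f"Stacktrace:\n{stacktrace}\n")
    return summary
```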

After implementing this, the "break → fix" cycle became significantly faster and more predictable.

3. Spotless and detekt: Basic Hygiene Without Human Intervention

The next layer is automated quality checks:

- Spotless: A versatile tool for automated code formatting;

- detekt: A static analyzer for finding potential issues and complex constructs in Kotlin.

I stopped seeing these as "extra tools." They're simply part of the process now.

If the code doesn't pass these checks, it's not finished. The agent returns and fixes it automatically.

This eliminates:

- Nitpicks during review;

- Arguments over style;

- Gradual readability degradation.
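The "not finished until the checks pass" rule can be sketched as a small loop. This is an illustration under assumptions, not the exact setup described here: `quality_gate` and the `ask_agent_to_fix` callback are hypothetical names (the callback stands in for whatever hands the report back to the agent), and the commands assume the Spotless and detekt Gradle plugins are applied, which provide the `spotlessCheck` and `detekt` tasks.

```python
import subprocess

# Assumed Gradle tasks from the Spotless and detekt plugins.
CHECKS = [
    ["./gradlew", "spotlessCheck"],
    ["./gradlew", "detekt"],
]


def run(cmd):
    """Run a command and return (exit code, combined stdout and stderr)."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return proc.returncode, proc.stdout + proc.stderr


def quality_gate(checks, ask_agent_to_fix, runner=run, max_attempts=3):
    """Return True once every check passes, False if failures remain after max_attempts runs."""
    for attempt in range(max_attempts):
        failures = []
        for cmd in checks:
            code, output = runner(cmd)
            if code != 0:
                # Keep only the tail of the output, where the errors usually are.
                failures.append(f"$ {' '.join(cmd)}\n{output[-2000:]}")
        if not failures:
            return True
        if attempt < max_attempts - 1:
            # Hypothetical callback: hand the report back to the agent for another pass.
            ask_agent_to_fix("\n\n".join(failures))
    return False
```

The runner is injectable so the loop can be exercised without Gradle, and the attempt cap keeps a stuck agent from looping forever.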

4. Translations: Eliminating Mechanical Errors

strings.xml turned out to be an unexpected pain point.

LLMs regularly make mistakes with:

- Apostrophes;

- Quotes;

- Escape sequences.

I didn't try to "teach" the model to handle these via text. It was easier to add:

- Validation;

- Automated fixes;

- XML parsing logic.

Important: no "brute force" global replacements—only targeted fixes.
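Android's string resource rules require an apostrophe to be escaped as `\'` unless the whole value is wrapped in double quotes. A minimal sketch of a targeted validator for that one rule, assuming the `strings.xml` content is available as text; `escape_apostrophes` and `find_apostrophe_issues` are hypothetical names, and values containing markup (such as `<b>`) are skipped for simplicity. It reports a fixed value per string name instead of rewriting the whole file, so each fix stays local to one entry.

```python
import xml.etree.ElementTree as ET


def escape_apostrophes(value: str) -> str:
    """Escape unescaped apostrophes, leaving already-escaped ones alone."""
    # A value wrapped in double quotes may contain raw apostrophes.
    if len(value) >= 2 and value.startswith('"') and value.endswith('"'):
        return value
    out = []
    escaped = False
    for ch in value:
        if escaped:
            out.append(ch)  # the character after a backslash is already escaped
            escaped = False
        elif ch == "\\":
            out.append(ch)
            escaped = True
        elif ch == "'":
            out.append("\\'")  # targeted fix: only this character changes
        else:
            out.append(ch)
    return "".join(out)


def find_apostrophe_issues(xml_text: str) -> dict[str, str]:
    """Map each <string name=...> with an unescaped apostrophe to its fixed value."""
    issues = {}
    root = ET.fromstring(xml_text)
    for element in root.iter("string"):
        # Skip values with child elements (markup) and empty values in this sketch.
        if len(element) or element.text is None:
            continue
        fixed = escape_apostrophes(element.text)
        if fixed != element.text:
            issues[element.get("name")] = fixed
    return issues
```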

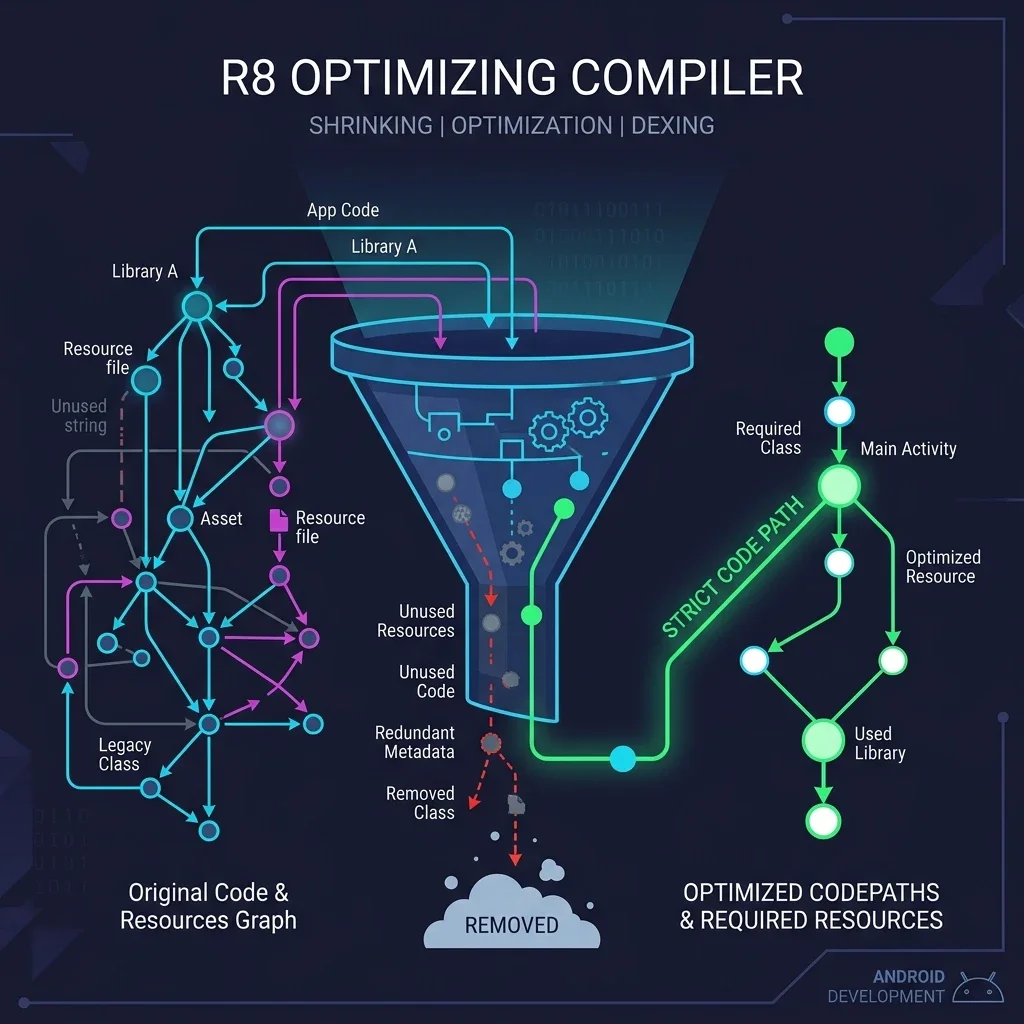

5. Performance and Binary Size

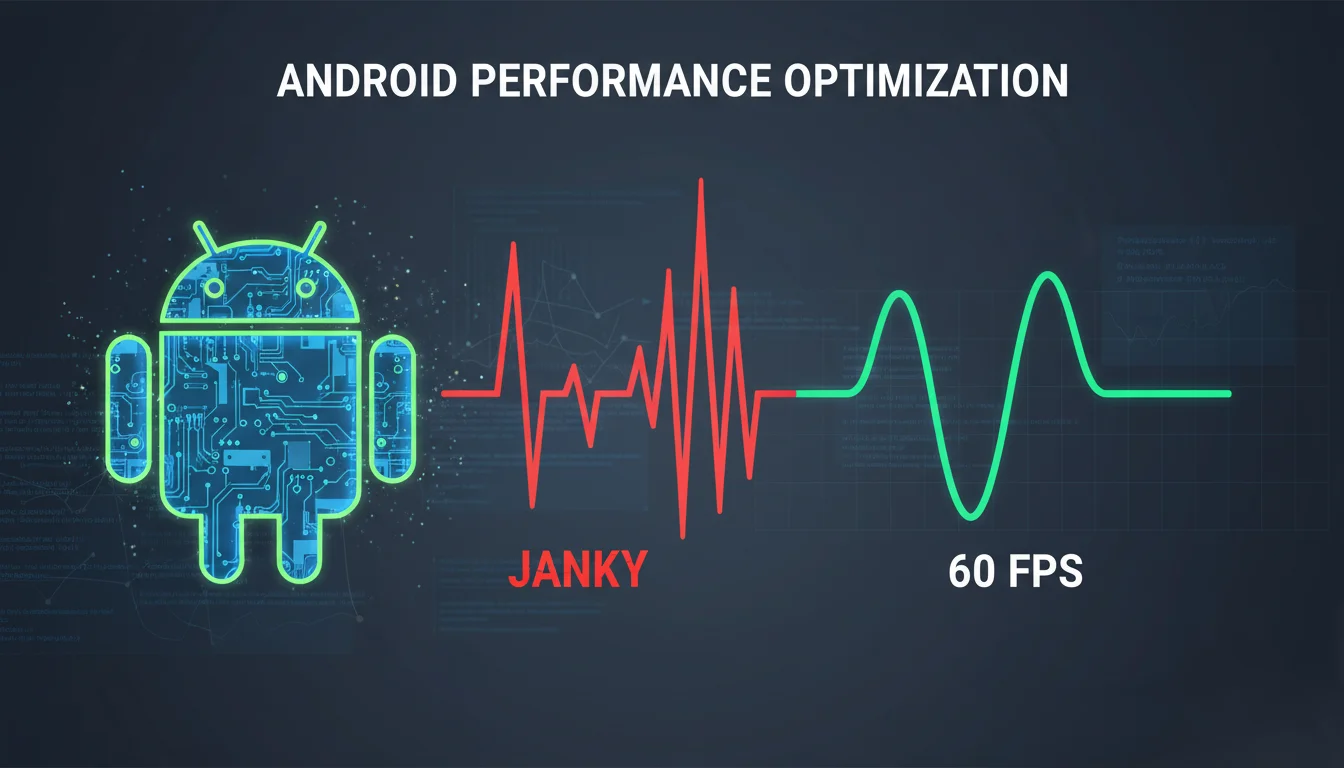

Another effect that appeared over time was "silent" product degradation.

An agent might solve a task but:

- Add a heavy dependency;

- Over-complicate the code;

- Increase the build size;

- Impact runtime performance.

To track this, I added:

- Baseline benchmarks;

- Build size monitoring.

This doesn't provide a 100% guarantee, but it allows you to spot deviations early.
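A minimal sketch of the baseline comparison, assuming metrics are stored as flat name-to-number maps where lower is better (APK size in bytes, startup time in milliseconds). `find_regressions`, the metric names, and the 5% default tolerance are illustrative, not values taken from this project.

```python
def find_regressions(current, baseline, tolerance=0.05):
    """List metrics that grew more than `tolerance` (relative) over the baseline.

    Assumes every metric is "lower is better", e.g. APK bytes or startup milliseconds.
    """
    regressions = []
    for metric, base in baseline.items():
        value = current.get(metric)
        if value is None:
            regressions.append(f"{metric}: missing from current run")
            continue
        if base > 0:
            growth = (value - base) / base
            if growth > tolerance:
                regressions.append(f"{metric}: {base} -> {value} (+{growth * 100:.1f}%)")
    return regressions
```

The APK size itself can come from `os.path.getsize` on the built artifact; where the baseline lives (a committed JSON file, CI artifacts) is left open here.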

6. Pre-commit Hooks: The Fast Filter (Mercurial/hg or Git)

I moved some of the checks into pre-commit hooks:

- Auto-formatting;

- Targeted linting;

- Architectural checks for critical modules.

The goal isn't to "ban everything," but to:

- Catch basic issues quickly;

- Keep them out of the repository;

- Reduce noise during the actual review.
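A sketch of such a hook as a Python script that works with either Git or Mercurial. Assumptions, all hypothetical: the top-level directory of a path is its Gradle module name, detekt is applied per module (giving `:<module>:detekt` tasks), `core` is the critical module, and `:konsist:test` is the task that runs the architecture tests. It runs `spotlessCheck` rather than `spotlessApply` so the hook never commits content that differs from what was staged.

```python
import subprocess
import sys

# Files added or modified in the pending commit, per VCS.
CHANGED_FILES_CMD = {
    "git": ["git", "diff", "--cached", "--name-only", "--diff-filter=ACM"],
    "hg": ["hg", "status", "--added", "--modified", "--no-status"],
}


def kotlin_files(paths):
    return [p for p in paths if p.endswith((".kt", ".kts"))]


def gradle_tasks(paths, critical_modules, arch_task=":konsist:test"):
    """Lint only touched modules; run architecture tests only if a critical module changed.

    Hypothetical mapping: the top-level directory is the Gradle module name.
    """
    modules = sorted({p.split("/", 1)[0] for p in paths if "/" in p})
    tasks = ["spotlessCheck"] + [f":{m}:detekt" for m in modules]
    if critical_modules.intersection(modules):
        tasks.append(arch_task)
    return tasks


def main(vcs="git"):
    listed = subprocess.run(CHANGED_FILES_CMD[vcs], capture_output=True, text=True, check=True)
    changed = kotlin_files(listed.stdout.splitlines())
    if not changed:
        return 0  # nothing Kotlin-related to check
    tasks = gradle_tasks(changed, critical_modules={"core"})
    return subprocess.run(["./gradlew", *tasks]).returncode


if __name__ == "__main__":
    sys.exit(main(sys.argv[1] if len(sys.argv) > 1 else "git"))
```

For Git, the script can be called from an executable `.git/hooks/pre-commit`; for Mercurial, from a `precommit` entry in the `[hooks]` section of `.hg/hgrc`, e.g. `precommit = python3 tools/precommit.py hg` (the script path is hypothetical). In both cases a non-zero exit code aborts the commit.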

Why It Works

The essence of development hasn't changed. Tests, linters, and architectural constraints have always been best practices.

What has changed is the speed at which code is generated.

When changes are generated rapidly and in large volumes, manual oversight doesn't scale. What you used to catch by eye now goes by too fast to process.

In this context, automated checks stop being a "good practice" and become a fundamental necessity—simply to keep the system in a stable state.

Summary

I don't see this approach as universal or mandatory for everyone.

But in my case, it had a tangible effect—primarily by reducing cognitive load.

I no longer need to:

- Keep every constraint in my head;

- Manually read through every single change;

- Sort through massive logs;

- Constantly re-verify basic things.

The system handles it, and I step in only where it actually makes sense.

The practical benefits I've noticed:

- More stable iterations without random regressions;

- Predictable agent behavior via feedback loops;

- Faster fix cycles;

- Less noise during review;

- Easier to scale agent usage.

It doesn't solve every problem, but it makes working with agents significantly more manageable—at least in my experience.

Useful Links

- Konsist: Current documentation for Kotlin architectural linting.

- Spotless: A tool for automated code formatting.

- detekt: A static code analyzer for Kotlin.

- Mercurial (hg): A version control system.

- Maestro: A UI testing automation platform for mobile apps.

In the next article, I'll dive into how I added automated UI scenario walkthroughs and interface verification using MCP Mobile and Maestro (a framework for simple and declarative UI testing of mobile apps).